
In case you missed our last blog on cognitive robotics, here is a quick recap: AI has become the main engine for robotics progress. The center of gravity is shifting from hardware to software, as standardized autonomy stacks, data pipelines, and foundation models define what modern robots can do.

The inflection point is starting to be visible in the real world: Amazon has deployed over a million robots while developing an AI foundation model said to improve fleet efficiency by 10%; Mercedes-Benz, BMW, and Toyota (among others) are already testing humanoids on their assembly lines; and a research group including Google DeepMind Robotics has found a way to make eight robotic arms work together seamlessly. However, many of the most exciting advancements are still only being tested outside of operational environments. Turning lab breakthroughs into deployable systems requires solving problems across the entire stack, which is much easier said than done.

Inside the robotic AI stack

On a simplified level, the robotic AI stack can be seen as laddering up from physical components towards actual tasks and the user. At the foundation sits the hardware (sensors, actuators, compute, power, and connectivity), which is largely commoditized today for traditional industrial robots. The real differentiation happens in the software layer above, where raw hardware transforms into intelligent behavior through the autonomy stack: perception systems that make sense of messy real-world data, planning algorithms that navigate constraints and uncertainty, and control systems that translate commands into precise physical actions.

What makes modern cognitive robots fundamentally different is how these components now integrate with foundation models that act as the higher-level policy for context understanding and continuous learning. Latest-version generalized robots leverage both vision and large language models to understand instructions and adapt to new scenarios, powered by a sophisticated data backbone that continuously ingests information from training, demonstrations, simulations, and fleet-wide operations.

At the system level, individual robots become nodes in broader orchestrated operations. Fleet management handles coordinating multiple units, while human-robot interaction brings the end-users into the mix. Together, these layers transform individual machines into adaptive, coordinated systems.

Where the bets diverge

There are different approaches to building and connecting these components in an actual robotic system. These decisions ultimately determine who ships first and who builds lasting moats.

Data flows tailored to the use case: Data strategies in improving and training models are becoming a key competitive edge. Human demonstrations excel at capturing complex, nuanced behaviors for tasks that are difficult to specify algorithmically. Simulation environments offer unlimited, safe training scenarios and enable systematic exploration of edge cases without physical constraints. Existing datasets and pre-trained models bring broader world knowledge and visual understanding developed on internet-scale data. Some invest heavily in comprehensive simulation environments or large pre-demonstration datasets, while others take a minimal pre-training approach and rely primarily on operational learning. However, for multimodal enterprise robotic systems, the real bottleneck is not synthetic or internet-scale data, but high-quality, task- and environment-specific interaction data from robots operating in the field. The integration of real-world operational data is critical: as robots operate in the field, every interaction becomes a training signal that can improve performance across the entire fleet, turning the scale of data into a competitive advantage regardless of the initial training strategy.

The intelligence boundary question: One of the strategic decisions companies face is where to draw the line between traditional rule-based control and AI-powered decision making. Conservative approaches reserve AI for high-level tasks like path planning and object recognition, while keeping low-level motor control and safety systems deterministic and predictable. Other companies are pushing AI deeper into the stack, using neural networks for everything from perception to motor control. Rule-based systems offer predictability and easier debugging, while end-to-end learning promises better performance and generalization, but with much more training required and less interpretability when things go wrong.
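To make the conservative end of this spectrum concrete, here is a minimal sketch of a learned policy wrapped in a deterministic safety layer. It is an illustration under our own assumptions, not any particular company's design: `JointLimits`, `safety_filter`, and `guarded_step` are hypothetical names, and the policy is simply any function that maps an observation to joint velocity commands.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass(frozen=True)
class JointLimits:
    """Hypothetical per-joint limit; a real robot would take this from its spec."""
    max_velocity: float  # rad/s


def safety_filter(command: Sequence[float], limits: Sequence[JointLimits]) -> List[float]:
    """Rule-based layer: clamp every commanded velocity to its joint limit."""
    if len(command) != len(limits):
        raise ValueError("command and limits must have the same length")
    return [
        max(-lim.max_velocity, min(lim.max_velocity, value))
        for value, lim in zip(command, limits)
    ]


def guarded_step(
    policy: Callable[[Sequence[float]], Sequence[float]],
    observation: Sequence[float],
    limits: Sequence[JointLimits],
) -> List[float]:
    """AI proposes a command; the deterministic layer has the final say."""
    raw_command = policy(observation)  # learned, hard to interpret
    return safety_filter(raw_command, limits)  # predictable, easy to debug


if __name__ == "__main__":
    # Stand-in for a neural network policy (assumption for illustration only).
    aggressive_policy = lambda obs: [2.0, -0.1, -5.0]
    limits = [JointLimits(max_velocity=1.0)] * 3
    print(guarded_step(aggressive_policy, [0.0, 0.0, 0.0], limits))
```

The design choice is where the boundary sits: everything above `safety_filter` can be retrained or swapped, while the filter itself stays simple enough to verify by inspection.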

Modular specialists versus generalist models: The industry is split between two architectural philosophies that mirror broader AI development trends. The modular approach uses specialized models for each capability—separate networks for vision, grasping, navigation, and task planning—allowing companies to unlock individual capabilities quicker, optimize each component independently, and swap out modules as better versions become available. The alternative is the emerging field of Large Behavior Models (LBMs), which attempt to learn all robotic behaviors end-to-end in a single massive network. Companies pursuing this path—many still in lab environments—argue that true intelligence emerges from the interaction between different capabilities, not their separation.

The approach companies take depends heavily on their use case, constraints, and maturity. Environments, tasks, form factors, and data availability ultimately define which path to commercialization is fastest, while maintaining a competitive edge over existing solutions and end-user trust in reliability.

Key hurdles before the robotics “ChatGPT moment”

Combining all of this for a functioning robotic system is far from simple. Firstly, most robotics companies face fundamental data challenges constraining their AI capabilities. Training datasets suffer from inconsistency between controlled collection environments and deployment conditions, where small variations can cause significant performance degradation. For simulations, the simulation-to-reality gap also stems from compounding modeling errors: imperfect physics engines, simplified contact dynamics, and idealized sensor models, for example, together create a mismatch between training and real-world data that even sophisticated domain randomization techniques struggle to bridge completely.
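For readers unfamiliar with domain randomization, the idea is to resample the simulator's physical and sensor parameters for every training episode so the model cannot overfit to one idealized world. The sketch below shows only the sampling step. The parameter names and ranges are assumptions chosen for illustration, and `SimParams` and `sample_sim_params` are hypothetical helpers, not part of any simulator's API.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    friction: float          # contact friction coefficient
    mass_scale: float        # multiplier on nominal object mass
    sensor_noise_std: float  # std. dev. of additive sensor noise


# Illustrative ranges only; real ranges are tuned per robot and task.
RANGES = {
    "friction": (0.5, 1.2),
    "mass_scale": (0.8, 1.2),
    "sensor_noise_std": (0.0, 0.02),
}


def sample_sim_params(rng: random.Random) -> SimParams:
    """Draw one randomized simulator configuration, uniformly per parameter."""
    return SimParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})


if __name__ == "__main__":
    rng = random.Random(42)  # seeded so runs are reproducible
    for episode in range(3):
        # In a real pipeline these values would configure the simulator
        # before each training episode.
        print(episode, sample_sim_params(rng))
```

Randomizing widens the range of conditions the model sees, but it cannot cover effects the simulator does not model at all, which is why the gap is hard to close completely.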

Building and improving the AI models is not straightforward either. All models encounter extremely high dimensionality when applied to continuous control problems. Unlike discrete token prediction, robotic planning requires reasoning over continuous trajectories in the configuration space while respecting dynamic constraints, obstacle avoidance, and actuator limitations.

Operating in actual environments is also time critical: robotic systems must process inputs, update world models, and generate commands within tight control loops, often sub-100 milliseconds. Partial observability and non-stationarity in real environments also mean robots must make assumptions about hidden states while adapting to unexpected events, challenges that static foundation models are not naturally equipped to handle. Zero-shot generalization that would be beneficial for novel tasks or environments remains elusive, as robots trained on specific datasets often fail when encountering even minor variations in their environments. Finally, reinforcement learning brings its own challenges, such as the temporal credit assignment problem: learning which past actions led to a good or bad outcome that happens much later.
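One common and only partial answer to temporal credit assignment is to propagate a late reward backwards through time with a discount factor, so earlier actions receive a smaller share of the credit. The sketch below assumes a single episode with a list of per-step rewards. `discounted_returns` is a hypothetical helper, and the discount of 0.5 is chosen purely to keep the arithmetic readable.

```python
from typing import List, Sequence


def discounted_returns(rewards: Sequence[float], gamma: float = 0.99) -> List[float]:
    """Return G_t = r_t + gamma * G_{t+1} for every step of one episode."""
    returns = [0.0] * len(rewards)
    running = 0.0
    # Walk backwards so each step sees the discounted sum of what follows it.
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns


if __name__ == "__main__":
    # The robot only learns at the final step that the grasp succeeded.
    rewards = [0.0, 0.0, 0.0, 1.0]
    print(discounted_returns(rewards, gamma=0.5))  # [0.125, 0.25, 0.5, 1.0]
```

Every earlier action gets some credit for the final success, whether or not it actually contributed, which is exactly why the problem remains hard over long horizons.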

The final challenge for robotics companies is system integration and transfer to an actual operational environment. Ultimately, creating a generalist robot, overcoming pre-training needs, or achieving robust human-robot interaction will require understanding the context of different inputs. This means reasoning about what is happening, why it matters, and how to act safely and effectively given all constraints, all at once. Many robotics companies say that they have struggled with the last 5% of reliability more than the first 95%, and this is even more true now with AI and more advanced use cases. Building a seamless bridge between simulation and the real world (“sim-to-real”) is key to many of those challenges.

Ongoing race to build the robotic brain

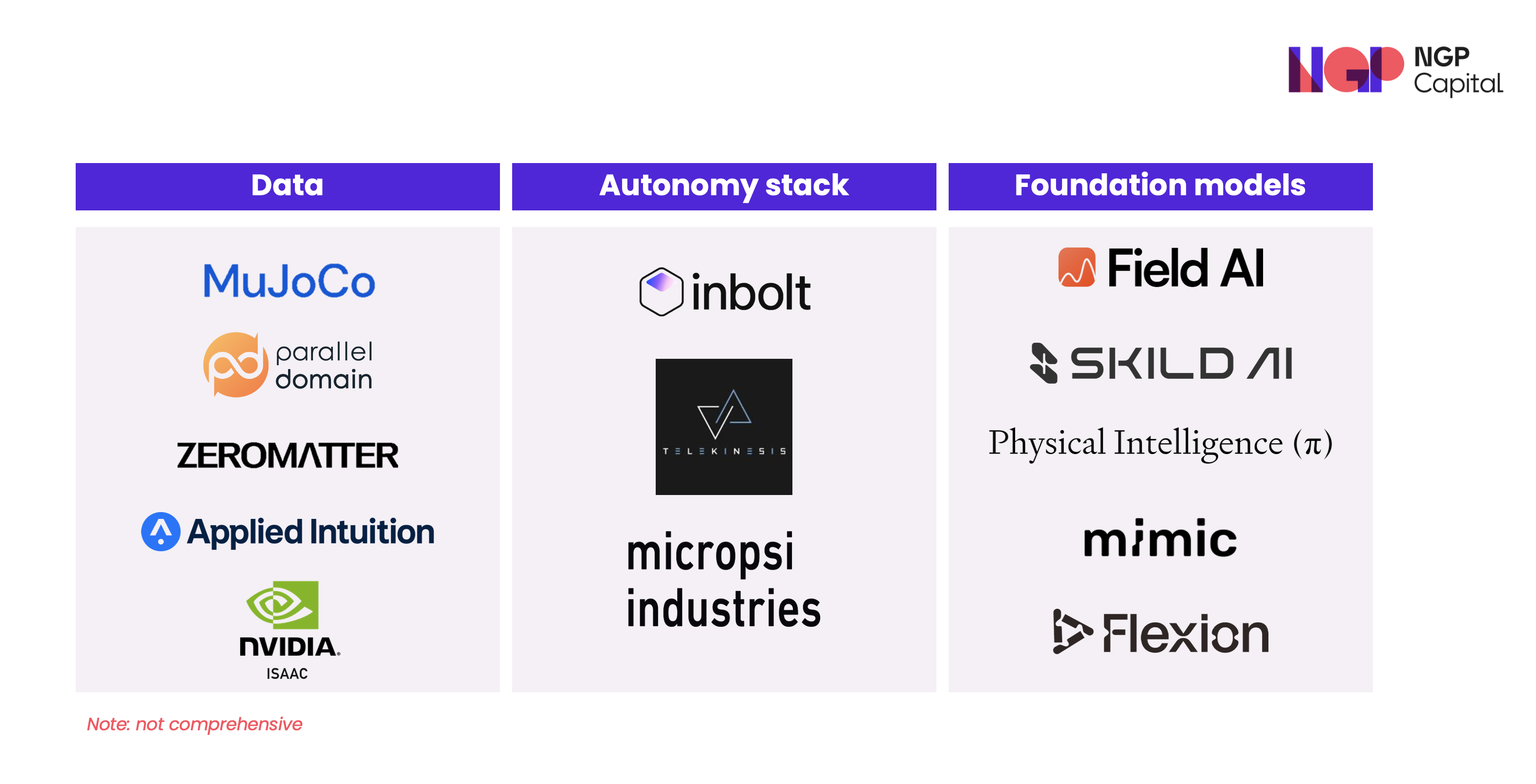

There are many data-focused companies addressing the scarcity and expense of real-world training data. MuJoCo, currently operated by Google DeepMind, has become the go-to physics engine for research, offering fast and accurate contact dynamics crucial for manipulation tasks. Parallel Domain specializes in synthetic data generation for perception systems, creating diverse scenarios and automatic ground truth labeling that would be much more expensive to collect in reality. Zeromatter offers a high-performance simulation platform with sensor-accurate environments, automatic scenario generation, and multiagent co-simulation across sectors like automotive, aerospace, agriculture, and energy, expanding simulation infrastructure beyond robotics.

Sector-focused approaches exist too: Applied Intuition, originally built around autonomous driving, provides an end-to-end toolchain for simulation, data management, and validation that is increasingly used as a reference infrastructure for safety-critical autonomous and robotic systems more broadly. Out of the incumbents, NVIDIA's Isaac Sim has emerged as a widely adopted solution, providing photorealistic environments where robots can safely fail millions of times while learning complex behaviors.

Perception-focused companies are solving the critical challenge of making sense of messy, real-world sensory data. Inbolt has carved out a niche in industrial vision, identifying and locating parts in cluttered bins with sub-millimeter precision. Telekinesis combines vision, tactile, and proprioceptive sensing, applicable also to small-batch manufacturing, where robots must adapt to frequent product variations. Micropsi's MIRAI controller adds camera-based adaptive behavior to existing industrial robots, compensating for real-time variances without reprogramming.

The foundation model companies are probably the most talked about in the industry currently. Field AI, SkildAI, and Physical Intelligence have collectively raised well over a billion dollars in funding, with valuations in the billions of dollars each, while pursuing their vision of general-purpose robotic intelligence. Among the newer European entrants, mimic Robotics focuses on learning from human demonstrations at scale, building models that can learn from watching a human perform a task once, while Flexion Robotics is building foundation models specifically for dexterous manipulation, training on millions of grasping attempts to generalize across object categories.

Our take

Based on what we see today, it is still too early to declare a single architectural winner, or to say whether several players will be needed across the stack. Whether companies pursue highly modular systems or more end-to-end behavior models, the approaches most likely to win are those that turn these capabilities into tangible customer value: high uptime and reliability, reasonable total cost of ownership, and fast time-to-value with real ROI.

We at NGP are always looking to partner with the best companies in this space. If you are a founder solving a challenge in this complex category, please reach out to Sanni or Christian!
